Deploying a Production-Ready AWS Infrastructure with Terraform: A Complete Guide

Building a secure, scalable containerized application on AWS using ECS Fargate, RDS PostgreSQL, and GitHub Actions CI/CD

I'm Zin Lin Htet. I love learning and sharing about Linux, Cloud, Docker, and K8s. I currently work as a DevOps Engineer at a well-known fintech company in Myanmar.

Introduction

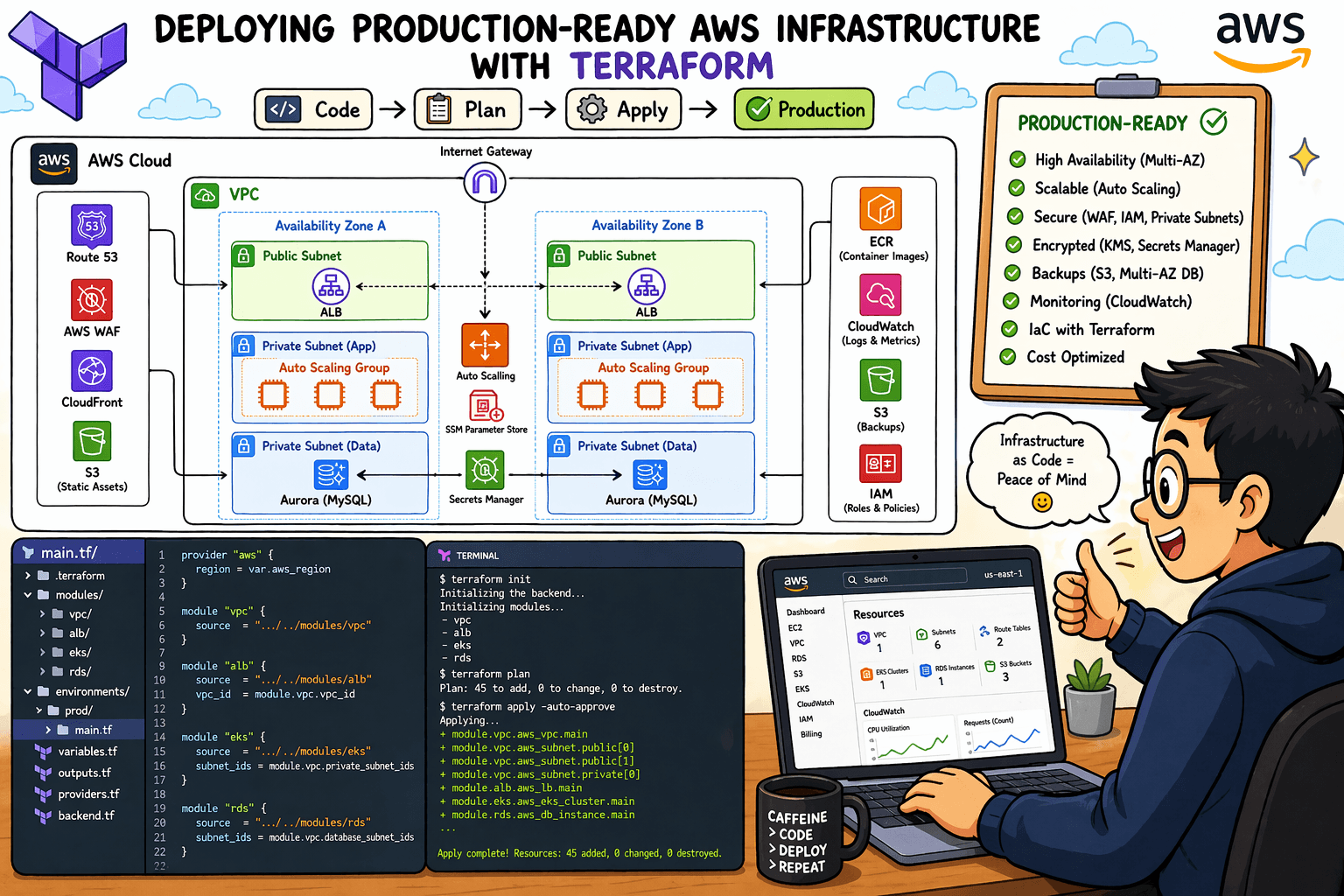

Deploying cloud infrastructure can be overwhelming. Between networking, security, databases, and CI/CD pipelines, there are countless moving parts to coordinate. In this guide, I'll walk you through a complete, production-oriented AWS infrastructure deployment using Terraform — from VPC design to automated container deployments via GitHub Actions OIDC.

Whether you're building your first cloud application or refining your infrastructure-as-code skills, this guide covers everything you need to deploy a containerized demo application with enterprise-grade security practices.

What We're Building

Our architecture follows AWS Well-Architected Framework principles with a focus on security, cost optimization, and operational excellence.

Technology Stack

| Layer | Technology | Purpose |

|---|---|---|

| IaC | Terraform | Infrastructure provisioning |

| Compute | ECS Fargate | Serverless container orchestration |

| Database | RDS PostgreSQL 16 | Managed relational database |

| Load Balancing | Application Load Balancer | HTTP/HTTPS traffic distribution |

| Registry | Amazon ECR | Docker image storage |

| CI/CD | GitHub Actions + OIDC | Automated build and deployment |

| Secrets | AWS Secrets Manager | Credential management |

| Networking | VPC + PrivateLink | Secure network isolation |

Project Structure

Our Terraform configuration is organized into 10 focused files:

demo-todo-app-ecs/

├── vpc.tf # Network foundation

├── alb.tf # Load balancer and target groups

├── rds.tf # PostgreSQL database

├── security-group.tf # Firewall rules

├── ecr.tf # Container registry

├── endpoint.tf # Private AWS connectivity

├── iam-role.tf # Task permissions

├── oidc.tf # GitHub Actions authentication

├── ecs.tf # Container orchestration

└── outputs.tf # Deployment information

Part 1: Networking with VPC

Every AWS deployment starts with the network. Our VPC design follows the principle of defense in depth — public resources face the internet, everything else stays private.

Key Design Decisions

| Decision | Implementation | Rationale |
|---|---|---|
| Multi-AZ deployment | 2 public + 2 private subnets | High availability across availability zones |
| No public IPs on private resources | map_public_ip_on_launch = false | Prevents accidental internet exposure |
| DNS resolution | enable_dns_hostnames = true | Required for ECS service discovery |

The Terraform Configuration

resource "aws_vpc" "vpc" {

cidr_block = var.cidr_block

enable_dns_hostnames = var.dns_hostnames

enable_dns_support = var.dns_support

tags = merge(local.common_tags, {

Name = "${var.vpc_name}"

})

}

resource "aws_subnet" "pub_subnets" {

vpc_id = aws_vpc.vpc.id

count = length(var.vpc_pub_subnets)

cidr_block = var.vpc_pub_subnets[count.index]

availability_zone = var.azs[count.index]

map_public_ip_on_launch = var.map_public

}

resource "aws_subnet" "priv_subnets" {

vpc_id = aws_vpc.vpc.id

count = length(var.vpc_priv_subnets)

cidr_block = var.vpc_priv_subnets[count.index]

availability_zone = var.azs[count.index]

map_public_ip_on_launch = false # ECS and RDS — no direct internet

}
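The resources above read all of their inputs from variables that are not shown. As a rough sketch of what the matching declarations could look like, the block below uses the same variable names, but the types, CIDR ranges, and availability zones are illustrative assumptions rather than the values from the actual repository.

```hcl
# Hypothetical declarations for the variables used in vpc.tf.
# Names match the resources above; defaults are illustrative only.
variable "cidr_block" {
  type    = string
  default = "10.0.0.0/16"
}

variable "azs" {
  type    = list(string)
  default = ["ap-southeast-1a", "ap-southeast-1b"]
}

# One CIDR per AZ; count.index pairs each subnet with var.azs[count.index],
# so these lists should not be longer than var.azs.
variable "vpc_pub_subnets" {
  type    = list(string)
  default = ["10.0.1.0/24", "10.0.2.0/24"]
}

variable "vpc_priv_subnets" {
  type    = list(string)
  default = ["10.0.11.0/24", "10.0.12.0/24"]
}

variable "dns_hostnames" {
  type    = bool
  default = true
}

variable "dns_support" {
  type    = bool
  default = true
}

variable "map_public" {
  type    = bool
  default = true
}
```

Because the subnets are created with count, adding a third AZ would only require appending one entry to each list.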

Why This Matters

Placing ECS tasks and RDS in private subnets means:

❌ No direct internet access to your application containers

❌ No direct connection attempts from the internet against your database

✅ All traffic flows through controlled security groups

Part 2: Security Groups — Layered Defense

Security groups are your cloud firewall. Instead of using CIDR blocks everywhere, we use security group references — allowing traffic only from known sources.

The Layered Model

Internet ──> ALB SG (80/443) ──> ECS SG (3000) ──> RDS SG (5432)

ECS SG ──> VPC Endpoint SG (443)

Implementation

resource "aws_security_group" "alb_sg" {

vpc_id = aws_vpc.vpc.id

name = var.alb_sg

description = "Allow HTTP Connection to ALB"

ingress {

from_port = 80

to_port = 80

protocol = "tcp"

cidr_blocks = ["0.0.0.0/0"]

description = "Allow HTTP Connection to ALB"

}

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = ["0.0.0.0/0"]

}

tags = merge(local.common_tags, {

Name = "${var.alb_sg}"

})

}

resource "aws_security_group" "backend_ecs_sg" {

vpc_id = aws_vpc.vpc.id

name = var.backend_ecs_sg

description = "Allow Backend ECS Connection from ALB"

ingress {

from_port = 3000

to_port = 3000

protocol = "tcp"

security_groups = [aws_security_group.alb_sg.id]

description = "Allow Backend ECS Connection from ALB"

}

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = ["0.0.0.0/0"]

}

tags = merge(local.common_tags, {

Name = "${var.backend_ecs_sg}"

})

}

resource "aws_security_group" "rds_sg" {

vpc_id = aws_vpc.vpc.id

name = var.rds_sg

description = "Allow RDS Connection from Backend ECS"

ingress {

from_port = 5432

to_port = 5432

protocol = "tcp"

security_groups = [aws_security_group.backend_ecs_sg.id]

description = "Allow RDS Connection from Backend ECS"

}

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = ["0.0.0.0/0"]

}

tags = merge(local.common_tags, {

Name = "${var.rds_sg}"

})

}

resource "aws_security_group" "vpc_endpoint_sg" {

vpc_id = aws_vpc.vpc.id

name = var.endpoint_sg

description = "Allow ECS tasks to reach VPC Endpoints"

ingress {

from_port = 443

to_port = 443

protocol = "tcp"

security_groups = [aws_security_group.backend_ecs_sg.id]

description = "Allow HTTPS from Backend ECS"

}

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = ["0.0.0.0/0"]

}

tags = merge(local.common_tags, {

Name = "${var.endpoint_sg}"

})

}
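The layered model lists ports 80 and 443 on the load balancer, while the ALB security group above opens only port 80. If HTTPS is needed, one way to open both ports is a dynamic ingress block. This is a sketch under assumptions: the resource name alb_sg_https and the "-https" name suffix are hypothetical, and port 443 only becomes useful once an HTTPS listener with a certificate exists, which is not covered here.

```hcl
# Hypothetical variant of the ALB security group that opens 80 and 443.
resource "aws_security_group" "alb_sg_https" {
  vpc_id      = aws_vpc.vpc.id
  name        = "${var.alb_sg}-https"
  description = "Allow HTTP and HTTPS connections to ALB"

  # One ingress rule is generated per port in the list.
  dynamic "ingress" {
    for_each = [80, 443]
    content {
      from_port   = ingress.value
      to_port     = ingress.value
      protocol    = "tcp"
      cidr_blocks = ["0.0.0.0/0"]
      description = "Allow port ${ingress.value} to ALB"
    }
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = merge(local.common_tags, {
    Name = "${var.alb_sg}-https"
  })
}
```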

Security Best Practices Applied

| Practice | Implementation |

|---|---|

| Principle of least privilege | Each SG only opens required ports |

| No CIDR-based internal trust | SG references admit traffic by group membership, not by IP range |

| Description on every rule | Self-documenting firewall rules |

Part 3: Database with RDS PostgreSQL

Our database layer prioritizes security by default — encryption, private subnets, and no hardcoded passwords.

Key Features

resource "aws_db_subnet_group" "rds_priv" {

name = var.rds_priv

subnet_ids = aws_subnet.priv_subnets[*].id

tags = merge(local.common_tags, {

Name = "${var.rds_priv}"

})

}

resource "aws_db_parameter_group" "rds_parameter" {

name = "demo-sms-db-parameter-group"

family = "postgres16"

description = "Performance tuned parameters for PostgreSQL 16"

parameter {

name = "max_connections"

value = "100"

apply_method = "pending-reboot"

}

parameter {

name = "work_mem"

value = "4096"

apply_method = "immediate"

}

parameter {

name = "effective_io_concurrency"

value = "32"

apply_method = "immediate"

}

parameter {

name = "autovacuum_vacuum_scale_factor"

value = "0.05"

apply_method = "immediate"

}

parameter {

name = "autovacuum_vacuum_cost_limit"

value = "200"

apply_method = "immediate"

}

parameter {

name = "log_min_duration_statement"

value = "2000"

apply_method = "immediate"

}

lifecycle {

create_before_destroy = true

}

tags = merge(local.common_tags, {

Name = "demo-sms-db-parameter-group"

})

}

resource "aws_db_instance" "rds" {

identifier = var.rds_name

db_name = var.db_name

engine = var.engine

engine_version = var.engine_version

instance_class = var.db_class

username = var.user

manage_master_user_password = true

storage_encrypted = var.encrypt

storage_type = var.storage_type

allocated_storage = var.storage

max_allocated_storage = var.max

db_subnet_group_name = aws_db_subnet_group.rds_priv.name

parameter_group_name = aws_db_parameter_group.rds_parameter.name

vpc_security_group_ids = [aws_security_group.rds_sg.id]

multi_az = var.multi

publicly_accessible = var.public

skip_final_snapshot = var.skip

final_snapshot_identifier = var.final

apply_immediately = var.apply

tags = merge(local.common_tags, {

Name = "${var.rds_name}"

})

}

data "aws_secretsmanager_secret" "rds_password" {

arn = aws_db_instance.rds.master_user_secret[0].secret_arn

}
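The project structure lists an outputs.tf that is not shown in this guide. As a minimal sketch, the database values that other tools or later steps tend to need could be exposed like this. The output names are assumptions; the attribute references are the same ones used elsewhere in this configuration.

```hcl
# Hypothetical outputs for the database layer.
output "rds_endpoint" {
  description = "Hostname of the PostgreSQL instance (no port)"
  value       = aws_db_instance.rds.address
}

output "rds_master_secret_arn" {
  description = "ARN of the Secrets Manager secret that RDS manages for the master user"
  value       = aws_db_instance.rds.master_user_secret[0].secret_arn
}
```

Only the secret's ARN is exposed here, never the password itself.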

The manage_master_user_password Revolution

Before this feature, we had two bad options:

Hardcode passwords in Terraform (terrible for security)

Use random_password resource and store in Secrets Manager (complex)

Now, AWS handles it automatically:

🔐 Generates a cryptographically secure password

🗝️ Stores it in Secrets Manager with automatic rotation

🔑 ECS tasks retrieve it at runtime via IAM permissions

Secrets Injection in ECS

{

"secrets": [

{

"name": "DB_PASSWORD",

"valueFrom": "arn:aws:secretsmanager:...:secret:...:password::"

},

{

"name": "DB_USER",

"valueFrom": "arn:aws:secretsmanager:...:secret:...:username::"

}

]

}

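No credential is hardcoded in your image, task definition, or Terraform variables; ECS resolves both values from Secrets Manager when the task starts. Keep in mind that the values are injected as environment variables, so code running inside the container can read them, and a rotated password only reaches tasks launched after the rotation.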

Part 4: VPC Endpoints — Private AWS Connectivity

Without VPC endpoints, ECS tasks in private subnets need a NAT Gateway to reach AWS services like ECR and CloudWatch Logs. NAT Gateways cost ~$0.045/hour plus data processing fees.

Our Endpoint Strategy

We use AWS PrivateLink via VPC endpoints to keep traffic inside the AWS network:

| Endpoint | Type | Purpose |
|---|---|---|
| ecr.dkr | Interface | Docker image pulls |
| ecr.api | Interface | ECR API operations |
| logs | Interface | CloudWatch Logs streaming |
| secretsmanager | Interface | Database credential retrieval |
| ssmmessages | Interface | ECS Exec |
| s3 | Gateway | S3 access (via route table, no ENI) |

Terraform Implementation

locals {

services = ["ecr.dkr", "ecr.api", "logs", "secretsmanager", "ssmmessages"]

}

resource "aws_vpc_endpoint" "demo_interfaces" {

for_each = toset(local.services)

vpc_id = aws_vpc.vpc.id

service_name = "com.amazonaws.ap-southeast-1.${each.value}"

vpc_endpoint_type = var.endpoint_type_1

private_dns_enabled = var.dns_enable

subnet_ids = aws_subnet.priv_subnets[*].id

security_group_ids = [aws_security_group.vpc_endpoint_sg.id]

tags = merge(local.common_tags, {

Name = "demo-todo-endpoint-${each.value}"

})

}

resource "aws_vpc_endpoint" "demo_s3_gw" {

vpc_id = aws_vpc.vpc.id

service_name = "com.amazonaws.ap-southeast-1.s3"

vpc_endpoint_type = var.endpoint_type_2

route_table_ids = [aws_route_table.priv_rtb.id]

tags = merge(local.common_tags, {

Name = "demo-todo-endpoint-s3"

})

}
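The gateway endpoint above attaches to aws_route_table.priv_rtb, which is defined elsewhere in the configuration and not shown here. A minimal sketch of what that route table and its subnet associations might look like follows. The association resource name and the Name tag are assumptions.

```hcl
# Sketch of the private route table referenced by the S3 gateway endpoint.
# It has no default route to the internet; the gateway endpoint adds
# its own S3 prefix-list route to this table automatically.
resource "aws_route_table" "priv_rtb" {
  vpc_id = aws_vpc.vpc.id

  tags = merge(local.common_tags, {
    Name = "demo-todo-private-rtb" # hypothetical name
  })
}

# Associate every private subnet with the private route table.
resource "aws_route_table_association" "priv_assoc" {
  count          = length(aws_subnet.priv_subnets)
  subnet_id      = aws_subnet.priv_subnets[count.index].id
  route_table_id = aws_route_table.priv_rtb.id
}
```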

Cost Impact

| Scenario | Monthly Cost (ap-southeast-1) |
|---|---|
| NAT Gateway (2 AZs) | ~$65 + data processing |
| VPC Endpoints (5 interface + 1 gateway) | ~$50 + data processing |
| Hybrid (endpoints + 1 NAT) | ~$85 + data processing |

For our demo, endpoints eliminate most NAT Gateway traffic. In production, you might keep one NAT for external API calls.
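The NAT Gateway figures can be checked with simple arithmetic. The sketch below assumes the ~$0.045/hour rate quoted earlier and roughly 730 hours in a month; the local names are hypothetical, and actual rates vary by region, so treat the results as estimates.

```hcl
# Back-of-the-envelope NAT Gateway cost, excluding data processing fees.
locals {
  hours_per_month = 730   # assumption: 8760 hours per year / 12
  nat_hourly_usd  = 0.045 # rate quoted above; check your region's pricing

  nat_one_gateway_usd  = local.nat_hourly_usd * local.hours_per_month # 32.85
  nat_two_gateways_usd = 2 * local.nat_one_gateway_usd                # 65.7
}

output "nat_monthly_estimate_usd" {
  value = {
    one_gateway  = local.nat_one_gateway_usd
    two_gateways = local.nat_two_gateways_usd
  }
}
```

The two-gateway result lines up with the ~$65 row in the table above.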

Part 5: ECS Fargate — Serverless Containers

ECS Fargate lets us run containers without managing servers. We define what we need, AWS handles the rest.

Task Definition

resource "aws_ecs_task_definition" "backend" {

family = "demo-todo-backend"

network_mode = "awsvpc"

requires_compatibilities = ["FARGATE"]

cpu = 256

memory = 512

execution_role_arn = aws_iam_role.ecs_execution_role.arn

task_role_arn = aws_iam_role.ecs_task_role.arn

runtime_platform {

operating_system_family = "LINUX"

cpu_architecture = "X86_64"

}

container_definitions = jsonencode([

{

name = "demo-todo-bk-api"

image = "${aws_ecr_repository.demo_ecr.repository_url}:latest"

essential = true

portMappings = [{

containerPort = 3000

hostPort = 3000

protocol = "tcp"

}]

# Pulling secrets directly from RDS Managed Secret

secrets = [

{

name = "DB_PASSWORD"

valueFrom = "${aws_db_instance.rds.master_user_secret[0].secret_arn}:password::"

},

{

name = "DB_USER"

valueFrom = "${aws_db_instance.rds.master_user_secret[0].secret_arn}:username::"

}

]

environment = [

{ name = "DB_HOST", value = aws_db_instance.rds.address },

{ name = "DB_NAME", value = var.db_name },

{ name = "DB_PORT", value = "5432" }

]

logConfiguration = {

logDriver = "awslogs"

options = {

"awslogs-group" = aws_cloudwatch_log_group.ecs_logs.name

"awslogs-region" = "ap-southeast-1"

"awslogs-stream-prefix" = "ecs"

"awslogs-create-group" = "true"

}

}

}

])

lifecycle {

ignore_changes = [

container_definitions

]

}

}
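The task definition and the service refer to aws_ecs_cluster.main and aws_cloudwatch_log_group.ecs_logs, neither of which is shown. A minimal sketch of both might look like the following. The cluster name matches the one the deployment workflow passes to aws ecs update-service, and the 7-day retention matches the cost estimate later in this guide; the log group name is an assumption.

```hcl
# Sketch of the cluster referenced by the ECS service.
resource "aws_ecs_cluster" "main" {
  name = "demo-todo-cluster"

  tags = local.common_tags
}

# Sketch of the log group referenced by the task definition.
resource "aws_cloudwatch_log_group" "ecs_logs" {
  name              = "/ecs/demo-todo-backend" # hypothetical name
  retention_in_days = 7

  tags = local.common_tags
}
```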

Service with Auto-Scaling

resource "aws_ecs_service" "backend_service" {

name = "demo-todo-service"

cluster = aws_ecs_cluster.main.id

task_definition = aws_ecs_task_definition.backend.arn

desired_count = 2

launch_type = "FARGATE"

enable_execute_command = true # For debugging: aws ecs execute-command

deployment_circuit_breaker {

enable = true

rollback = true # Auto-rollback on failed deployment

}

network_configuration {

subnets = aws_subnet.priv_subnets[*].id

security_groups = [aws_security_group.backend_ecs_sg.id]

assign_public_ip = false # Security: No public IP

}

load_balancer {

target_group_arn = aws_lb_target_group.ecs_tg.arn

container_name = "demo-todo-bk-api"

container_port = 3000

}

lifecycle {

ignore_changes = [desired_count] # Let auto-scaler manage this

}

}
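The service registers its tasks with aws_lb_target_group.ecs_tg, which lives in alb.tf and is not shown in this guide. The sketch below shows one plausible shape for the load balancer, target group, and HTTP listener. The resource names aws_lb.alb and aws_lb_listener.http, the name strings, and the /health path are assumptions; the one fixed requirement is target_type = "ip", because Fargate tasks in awsvpc mode register by IP address.

```hcl
# Hypothetical internet-facing ALB in the public subnets.
resource "aws_lb" "alb" {
  name               = "demo-todo-alb"
  internal           = false
  load_balancer_type = "application"
  security_groups    = [aws_security_group.alb_sg.id]
  subnets            = aws_subnet.pub_subnets[*].id

  tags = local.common_tags
}

# Target group for the Fargate tasks listening on port 3000.
resource "aws_lb_target_group" "ecs_tg" {
  name        = "demo-todo-tg"
  port        = 3000
  protocol    = "HTTP"
  vpc_id      = aws_vpc.vpc.id
  target_type = "ip" # required for awsvpc network mode

  health_check {
    path                = "/health" # assumption: the app exposes this route
    matcher             = "200"
    interval            = 30
    healthy_threshold   = 2
    unhealthy_threshold = 3
  }
}

# Forward all HTTP traffic on port 80 to the target group.
resource "aws_lb_listener" "http" {
  load_balancer_arn = aws_lb.alb.arn
  port              = 80
  protocol          = "HTTP"

  default_action {
    type             = "forward"
    target_group_arn = aws_lb_target_group.ecs_tg.arn
  }
}
```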

Auto-Scaling Configuration

resource "aws_appautoscaling_target" "ecs_target" {

max_capacity = 4

min_capacity = 2

resource_id = "service/${aws_ecs_cluster.main.name}/${aws_ecs_service.backend_service.name}"

scalable_dimension = "ecs:service:DesiredCount"

service_namespace = "ecs"

}

resource "aws_appautoscaling_policy" "ecs_policy_memory" {

name = "memory-base-autoscaling"

policy_type = "TargetTrackingScaling"

resource_id = aws_appautoscaling_target.ecs_target.resource_id

scalable_dimension = aws_appautoscaling_target.ecs_target.scalable_dimension

service_namespace = aws_appautoscaling_target.ecs_target.service_namespace

target_tracking_scaling_policy_configuration {

predefined_metric_specification {

predefined_metric_type = "ECSServiceAverageMemoryUtilization"

}

target_value = 70.0

scale_in_cooldown = 300

scale_out_cooldown = 60

}

}
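The policy above scales on memory only. If the workload is CPU-bound, a second target tracking policy can be attached to the same scaling target. This is a sketch, with the resource name, policy name, and the 70% target chosen as assumptions that mirror the memory policy.

```hcl
# Hypothetical CPU-based companion to the memory policy.
resource "aws_appautoscaling_policy" "ecs_policy_cpu" {
  name               = "cpu-base-autoscaling"
  policy_type        = "TargetTrackingScaling"
  resource_id        = aws_appautoscaling_target.ecs_target.resource_id
  scalable_dimension = aws_appautoscaling_target.ecs_target.scalable_dimension
  service_namespace  = aws_appautoscaling_target.ecs_target.service_namespace

  target_tracking_scaling_policy_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ECSServiceAverageCPUUtilization"
    }

    target_value       = 70.0
    scale_in_cooldown  = 300
    scale_out_cooldown = 60
  }
}
```

With more than one target tracking policy on a target, Application Auto Scaling scales out when any policy calls for it and scales in only when all of them agree.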

Key Features Explained

| Feature | Purpose |
|---|---|
| enable_execute_command | Allows aws ecs execute-command for debugging without SSH |
| deployment_circuit_breaker | Automatically rolls back failed deployments |
| ignore_changes = [desired_count] | Prevents Terraform from fighting the auto-scaler |
| assign_public_ip = false | Ensures tasks stay private |

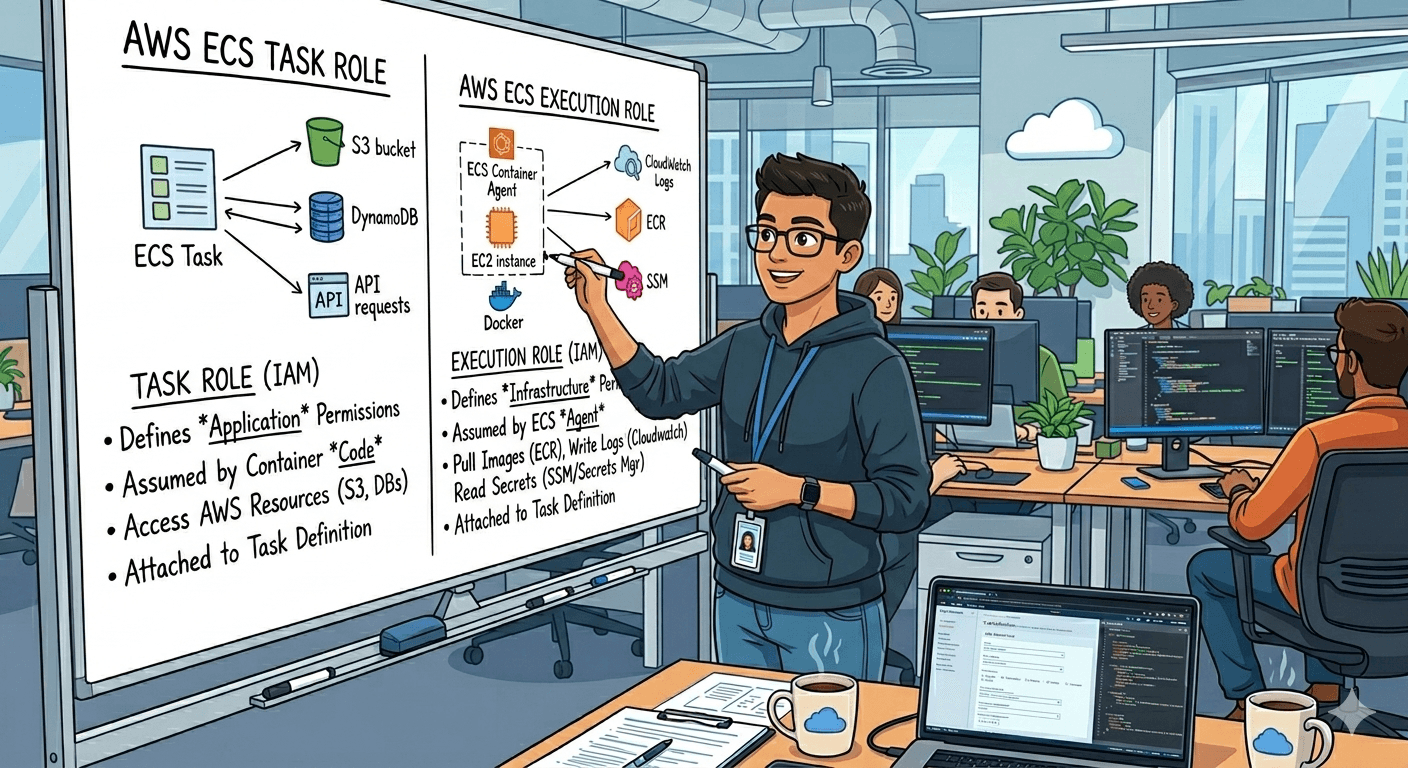

Part 6: IAM Roles — Least Privilege

We separate concerns into two roles:

ECS Execution Role

Used by the ECS agent to pull images and stream logs:

resource "aws_iam_role" "ecs_execution_role" {

name = "demo-todo-execution-role"

assume_role_policy = jsonencode({

Version = "2012-10-17"

Statement = [{

Action = "sts:AssumeRole"

Effect = "Allow"

Principal = { Service = "ecs-tasks.amazonaws.com" }

}]

})

}

resource "aws_iam_role_policy_attachment" "ecs_execution_standard" {

role = aws_iam_role.ecs_execution_role.name

policy_arn = "arn:aws:iam::aws:policy/service-role/AmazonECSTaskExecutionRolePolicy"

}

resource "aws_iam_role_policy" "ecs_execution_secrets" {

name = "demo-todo-execution-secrets-policy"

role = aws_iam_role.ecs_execution_role.id

policy = jsonencode({

Version = "2012-10-17"

Statement = [{

Effect = "Allow"

Action = ["secretsmanager:GetSecretValue"]

Resource = [aws_db_instance.rds.master_user_secret[0].secret_arn]

}]

})

}

ECS Task Role

Used by the application container for runtime permissions:

resource "aws_iam_role" "ecs_task_role" {

name = "demo-todo-task-role"

assume_role_policy = jsonencode({

Version = "2012-10-17"

Statement = [{

Action = "sts:AssumeRole"

Effect = "Allow"

Principal = { Service = "ecs-tasks.amazonaws.com" }

}]

})

}

resource "aws_iam_role_policy" "ecs_exec_policy" {

name = "ecs-exec-permissions"

role = aws_iam_role.ecs_task_role.id

policy = jsonencode({

Version = "2012-10-17"

Statement = [{

Effect = "Allow"

Action = [

"ssmmessages:CreateControlChannel",

"ssmmessages:CreateDataChannel",

"ssmmessages:OpenControlChannel",

"ssmmessages:OpenDataChannel"

]

Resource = "*"

}]

})

}

Why Two Roles?

| Role | Used By | Permissions |

|---|---|---|

| Execution Role | ECS container agent (Fargate platform) | ECR pull, CloudWatch logs, Secrets Manager read |

| Task Role | Your application code | SSM Exec, S3, DynamoDB, etc. |

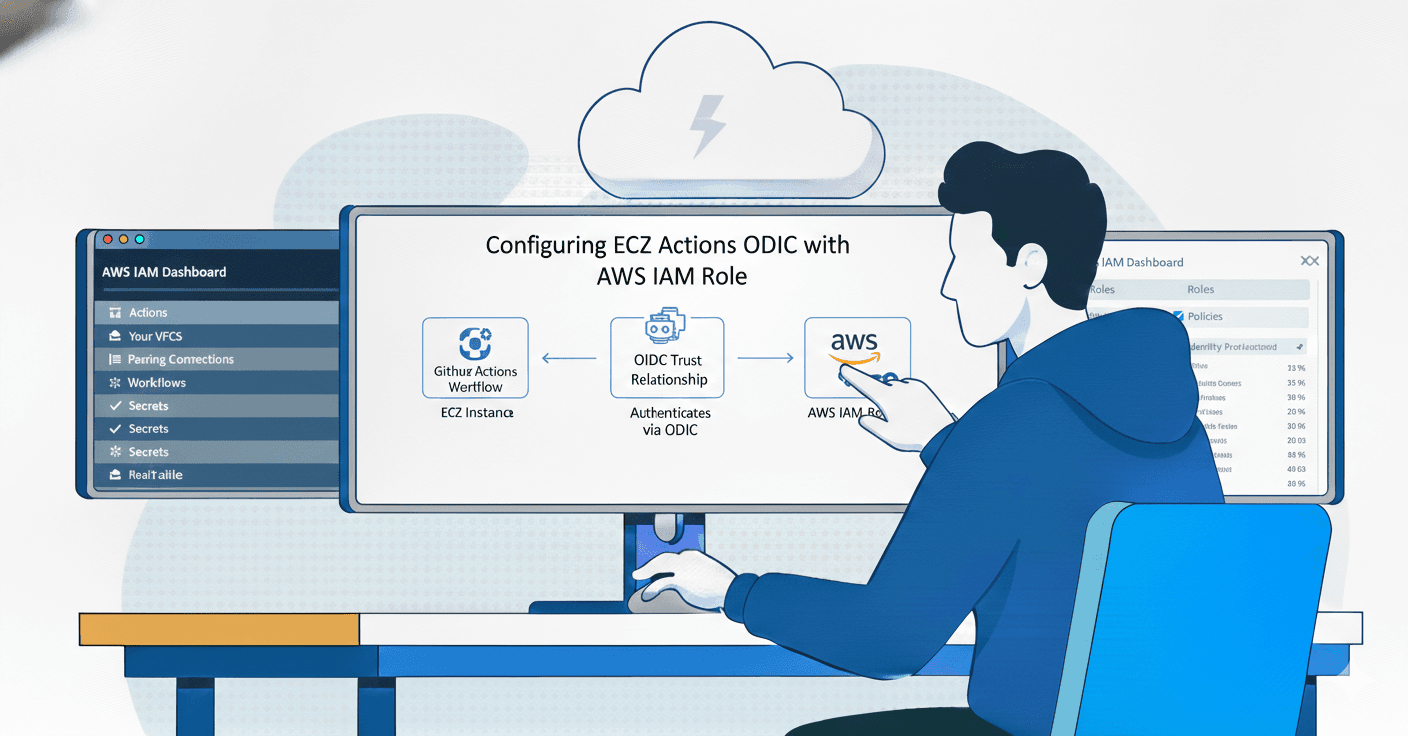

Part 7: GitHub Actions CI/CD with OIDC

Our CI/CD pipeline uses OpenID Connect (OIDC) to authenticate with AWS — no long-lived access keys required.

How OIDC Works

GitHub Actions ──> GitHub OIDC Provider ──> AWS STS ──> Temporary Credentials (1 hour)

The Terraform OIDC Setup

resource "aws_iam_openid_connect_provider" "github" {

url = "https://token.actions.githubusercontent.com"

client_id_list = ["sts.amazonaws.com"]

thumbprint_list = ["1c58a3a8518e8759bf075b76b750d4f2df264fcd"]

}

resource "aws_iam_role" "demo-todo-github_actions_role" {

name = "demo-todo-github-oidc-role"

assume_role_policy = jsonencode({

Version = "2012-10-17"

Statement = [{

Effect = "Allow"

Principal = {

Federated = aws_iam_openid_connect_provider.github.arn

}

Action = "sts:AssumeRoleWithWebIdentity"

Condition = {

StringLike = {

"token.actions.githubusercontent.com:sub" = "repo:your-org/demo-todo-api:*"

}

StringEquals = {

"token.actions.githubusercontent.com:aud" = "sts.amazonaws.com"

}

}

}]

})

}
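The trust policy above only controls who may assume the role; on its own it grants no permissions. The workflow in the next section pushes to ECR, reads scan findings, registers a task definition, and updates the service, so the role likely needs a permissions policy along these lines. This is a sketch, not the policy from the repository: the resource and policy names are hypothetical, and the action list is inferred from the workflow steps.

```hcl
# Hypothetical permissions policy for the GitHub Actions role.
resource "aws_iam_role_policy" "github_actions_deploy" {
  name = "demo-todo-github-deploy-policy"
  role = aws_iam_role.demo-todo-github_actions_role.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [
      {
        Sid      = "EcrLogin"
        Effect   = "Allow"
        Action   = ["ecr:GetAuthorizationToken"]
        Resource = "*" # this action does not support resource-level scoping
      },
      {
        Sid    = "EcrPushAndScan"
        Effect = "Allow"
        Action = [
          "ecr:BatchCheckLayerAvailability",
          "ecr:BatchGetImage",
          "ecr:InitiateLayerUpload",
          "ecr:UploadLayerPart",
          "ecr:CompleteLayerUpload",
          "ecr:PutImage",
          "ecr:DescribeImageScanFindings"
        ]
        Resource = aws_ecr_repository.demo_ecr.arn
      },
      {
        Sid      = "EcsTaskDefinitions"
        Effect   = "Allow"
        Action   = ["ecs:DescribeTaskDefinition", "ecs:RegisterTaskDefinition"]
        Resource = "*"
      },
      {
        Sid      = "EcsUpdateService"
        Effect   = "Allow"
        Action   = ["ecs:UpdateService"]
        Resource = aws_ecs_service.backend_service.id # the service ARN
      },
      {
        # Registering a task definition requires passing both ECS roles.
        Sid      = "PassEcsRoles"
        Effect   = "Allow"
        Action   = ["iam:PassRole"]
        Resource = [
          aws_iam_role.ecs_execution_role.arn,
          aws_iam_role.ecs_task_role.arn
        ]
      }
    ]
  })
}
```

Scoping iam:PassRole to the two ECS roles keeps the pipeline from handing any other role to a task.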

The GitHub Actions Workflow

name: CI/CD for Demo TODO API

on:

push:

branches: [ "devops" ]

permissions:

id-token: write

contents: read

jobs:

build-and-push:

runs-on: ubuntu-latest

outputs:

image_tag: ${{ steps.vars.outputs.short_sha }}

registry: ${{ steps.login-ecr.outputs.registry }}

steps:

- name: Checkout Code

uses: actions/checkout@v4

- name: Setup Node.js

uses: actions/setup-node@v4

with:

node-version: '24'

cache: 'npm'

- run: npm install

- run: npm test --if-present

- name: Configure AWS Credentials

uses: aws-actions/configure-aws-credentials@v4

with:

role-to-assume: ${{ secrets.AWS_OIDC_ROLE }}

aws-region: ap-southeast-1

- name: Login to Amazon ECR

id: login-ecr

uses: aws-actions/amazon-ecr-login@v2

- name: Set Short SHA

id: vars

run: echo "short_sha=$(echo $GITHUB_SHA | cut -c1-7)" >> $GITHUB_OUTPUT

- name: Build and Push to ECR

env:

ECR_REGISTRY: ${{ steps.login-ecr.outputs.registry }}

ECR_REPOSITORY: ${{ secrets.ECR_REPOSITORY }}

run: |

docker build -t $ECR_REGISTRY/$ECR_REPOSITORY:${{ steps.vars.outputs.short_sha }} .

docker push $ECR_REGISTRY/$ECR_REPOSITORY:${{ steps.vars.outputs.short_sha }}

deploy:

needs: build-and-push

runs-on: ubuntu-latest

steps:

- name: Configure AWS Credentials

uses: aws-actions/configure-aws-credentials@v4

with:

role-to-assume: ${{ secrets.AWS_OIDC_ROLE }}

aws-region: ap-southeast-1

- name: Check ECR Scan Results

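run: |

# Scan-on-push runs asynchronously, so wait for the scan to finish first

aws ecr wait image-scan-complete \

--repository-name ${{ secrets.ECR_REPOSITORY }} \

--image-id imageTag=${{ needs.build-and-push.outputs.image_tag }}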

FINDINGS=$(aws ecr describe-image-scan-findings \

--repository-name ${{ secrets.ECR_REPOSITORY }} \

--image-id imageTag=${{ needs.build-and-push.outputs.image_tag }} \

--query 'imageScanFindings.findingSeverityCounts.CRITICAL' --output text)

if [ "$FINDINGS" != "None" ] && [ "$FINDINGS" != "0" ]; then

echo "Critical vulnerabilities found: $FINDINGS"

exit 1

fi

- name: Update Task Definition

env:

IMAGE_URI: ${{ needs.build-and-push.outputs.registry }}/${{ secrets.ECR_REPOSITORY }}:${{ needs.build-and-push.outputs.image_tag }}

run: |

# 1. Fetch current task definition

aws ecs describe-task-definition \

--task-definition demo-todo-backend \

--query taskDefinition > task-def.json

# 2. Inject the correct Image URI

cat task-def.json | jq --arg IMAGE "$IMAGE_URI" \

'.containerDefinitions[0].image = $IMAGE | del(.taskDefinitionArn, .revision, .status, .requiresAttributes, .compatibilities, .registeredAt, .registeredBy)' \

> new-task-def.json

# 3. Register and Update

NEW_TASK_DEF=$(aws ecs register-task-definition --cli-input-json file://new-task-def.json)

REVISION=$(echo $NEW_TASK_DEF | jq -r '.taskDefinition.revision')

aws ecs update-service \

--cluster demo-todo-cluster \

--service demo-todo-service \

--task-definition demo-todo-backend:${REVISION}

Security Features in CI/CD

| Feature | Implementation | Benefit |
|---|---|---|
| OIDC Authentication | role-to-assume with no access keys | No secrets to leak or rotate |
| Immutable Image Tags | Short Git SHA | Traceable, prevents cache issues |
| ECR Scan Gate | Critical vulnerability check | Blocks vulnerable deployments |
| Task Definition Immutability | New revision per deployment | Rollback capability |
| Circuit Breaker | ECS auto-rollback | Failed deployments self-heal |

Part 8: Cost Optimization

Monthly Cost Estimate (ap-southeast-1, demo workload)

| Resource | Specification | Monthly Cost |
|---|---|---|
| ALB | 1 load balancer | ~$20 |
| ECS Fargate | 2 tasks × 256 CPU units (0.25 vCPU) / 512 MB | ~$15 |
| RDS PostgreSQL | db.t3.micro, single AZ | ~$15 |
| VPC Endpoints | 5 interface + 1 gateway | ~$50 |
| NAT Gateway | 1 shared | ~$35 |
| ECR | ~10 images | ~$1 |
| CloudWatch Logs | 7-day retention | ~$5 |
| Data Transfer | Minimal | ~$5 |
| Total | | ~$145/month |

Cost Reduction Strategies

| Strategy | Savings |

|---|---|

| Use Fargate Spot for dev | ~70% on compute |

| Remove NAT Gateway (endpoints only) | ~$35/month |

| Single AZ for non-prod | ~$15/month |

| ECR lifecycle policy (10 images) | Prevents storage bloat |

| Auto-scaling min=1 for dev | ~50% on compute |
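The ECR lifecycle policy from the table can be expressed in Terraform roughly as follows. This is a sketch: the repository resource name matches the one referenced by the task definition, but the repository name, the lifecycle policy resource name, and the immutability setting are assumptions. Scan-on-push is enabled because the deployment workflow gates on scan findings.

```hcl
# Sketch of the ECR repository referenced as aws_ecr_repository.demo_ecr.
resource "aws_ecr_repository" "demo_ecr" {
  name                 = "demo-todo-api" # hypothetical repository name
  image_tag_mutability = "IMMUTABLE"     # optional: a pushed tag cannot be overwritten

  image_scanning_configuration {
    scan_on_push = true
  }

  tags = local.common_tags
}

# Keep only the 10 most recent images; older ones are expired.
resource "aws_ecr_lifecycle_policy" "keep_last_10" {
  repository = aws_ecr_repository.demo_ecr.name

  policy = jsonencode({
    rules = [{
      rulePriority = 1
      description  = "Expire all but the 10 most recent images"
      selection = {
        tagStatus   = "any"
        countType   = "imageCountMoreThan"
        countNumber = 10
      }
      action = {
        type = "expire"
      }
    }]
  })
}
```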

Part 9: Lessons Learned & Best Practices

Always Use Immutable Image Tags: :latest causes deployment headaches. Use Git SHA or semantic versioning.

Separate Execution and Task Roles: Don't give your application the permissions needed to pull images.

VPC Endpoints Save Money and Improve Security: NAT Gateway data processing fees add up. Endpoints keep traffic internal.

Enable Circuit Breakers: Failed deployments should roll back automatically. Don't wake up at 3 AM.

Use manage_master_user_password: Let AWS handle database credentials. Your future self will thank you.

Tag Everything: Consistent tags enable cost allocation, automation, and cleanup.

locals {

common_tags = {

Project = "demo-todo"

Managedby = "Terraform"

Owner = "DevOps Team"

Environment = "UAT"

}

}

Conclusion

This infrastructure demonstrates that security and simplicity aren't mutually exclusive. By leveraging Terraform's declarative nature, AWS managed services, and modern CI/CD practices, we've built a system that:

✅ Has no hardcoded credentials

✅ Runs in private subnets with no direct internet exposure

✅ Auto-scales based on demand

✅ Deploys automatically via GitHub Actions

✅ Self-heals from failed deployments

✅ Costs under $150/month for a demo environment

The complete code is available in the repository. Feel free to adapt it for your own projects — and remember, infrastructure as code isn't just about automation, it's about repeatability, auditability, and peace of mind.

Congratulations, you did it! This lab was completed successfully without any errors. If you run into any issues, please let me know and I will be happy to help. If you found this article useful, please like and share it. Stay tuned for the next articles, and let's keep learning together.

Here is my code repo and IAC repo.

| Code Repo | Code |

|---|---|

| IAC Repo | IAC |