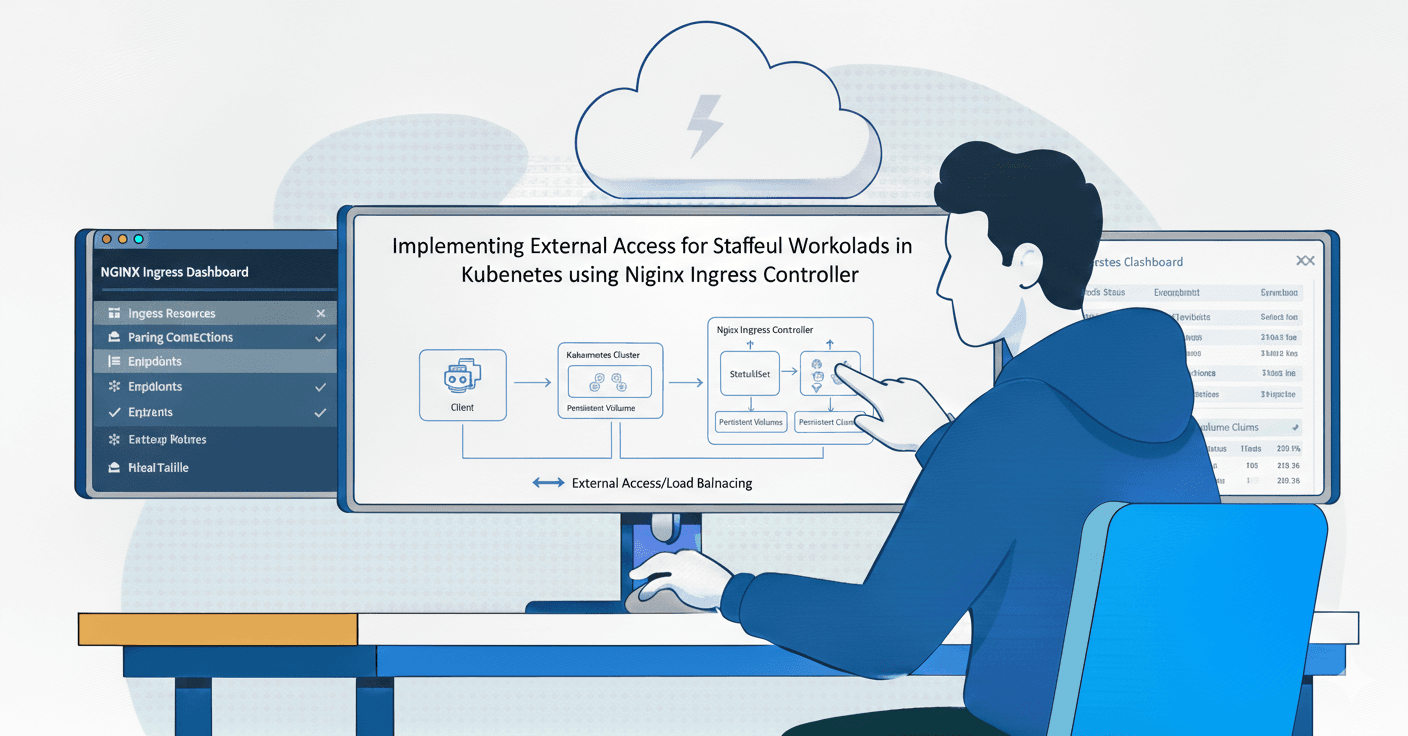

Bridging the Gap: Implementing External Access for Stateful Workloads in Kubernetes

I'm Zin Lin Htet. I love to learn and share about Linux, Cloud, Docker, and K8s. I'm currently working as a DevOps Engineer at one of the famous fintech companies in Myanmar.

Today, we will learn how to set up a backend API container with PostgreSQL on Kubernetes. In this tutorial, you will learn how to write a multi-stage Dockerfile, install the MetalLB load balancer, set up the Rancher Local Path Provisioner for storage, and install the NGINX Ingress Controller.

Lab Purpose

I want to share my past Kubernetes experience in this lab. Disclaimer: In this lab, I created a simple backend CRUD API using Gemini AI. To be honest, I'm not a developer, so my backend code is not perfect.

The primary objective of this lab is to architect and deploy a production-ready SMS API on a bare-metal Kubernetes environment. This exercise demonstrates how to bridge the gap between internal cluster resources and external network accessibility without relying on managed cloud services.

If you don't know how to set up a Kubernetes cluster, you can use the link below to install and set one up.

Here is the Kubernetes Cluster Installation

Here is my Code Repo

| Tools | Purpose of Use | Link |
| --- | --- | --- |
| Docker | To package applications and their dependencies into a standardized unit called a container | https://www.docker.com/ |
| Docker Hub | To store the container images | https://hub.docker.com/ |
| Kubernetes | To automate the deployment, scaling, and management of containerized applications, enabling reliable and efficient operations across clusters of machines | https://kubernetes.io/ |
| Helm | The package manager for Kubernetes, designed to simplify deployment, management, and scaling of complex applications by bundling YAML configuration files into reusable, versioned packages called Charts | https://helm.sh/ |
| MetalLB | To address the lack of native cloud-provider load balancers in on-premise environments by assigning IP addresses from a configured pool | https://metallb.io/ |
| Rancher Local Path Provisioner | To dynamically provision Persistent Volumes (PVs) using the local storage on each Kubernetes node | https://github.com/rancher/local-path-provisioner |
| Nginx Ingress Controller | Specialized load balancer and reverse proxy that manages external access to services within a Kubernetes cluster | https://github.com/kubernetes/ingress-nginx |

1. Multistage Dockerfile

Why do we need a multistage Dockerfile?

Multistage Docker builds are a game-changer for anyone tired of bloated, heavy container images. In a nutshell, they allow you to use multiple FROM statements in a single Dockerfile, effectively separating the build environment from the runtime environment.

Here is why they are considered a best practice.

Significant Size Reduction

In a standard Dockerfile, everything you use to compile your app (compilers, build tools, header files) remains in the final image. With multistage builds, you "leave the trash behind." You compile your app in the first stage and then copy only the final executable into a tiny, production-ready image.

Enhanced Security

A smaller image has a smaller attack surface. By removing package managers (like apt or npm), source code, and shell utilities from your final production image, you give hackers fewer tools to work with if they manage to gain access to your container.

Simplified CI/CD Pipelines

Before multistage builds, developers often had to maintain two separate Dockerfiles (e.g., Dockerfile.build and Dockerfile.production) and use shell scripts to move artifacts between them. Multistage builds allow you to keep all that logic in one file, making your CI/CD pipeline much cleaner.

Here is my Dockerfile using multistage.

# Builder stage

FROM node:22-alpine AS builder

WORKDIR /app

RUN apk add --no-cache openssl libc6-compat curl

COPY package*.json ./

COPY prisma ./prisma/

RUN npm install

RUN npx prisma generate

# Runner stage

FROM node:22-alpine AS runner

WORKDIR /app

RUN apk add --no-cache openssl libc6-compat curl

COPY --from=builder /app/node_modules ./node_modules

COPY --from=builder /app/prisma ./prisma

# Copy application source

COPY package*.json ./

COPY server.js ./

COPY entrypoint.sh ./

RUN chmod +x entrypoint.sh

EXPOSE 3000

ENTRYPOINT ["/bin/sh", "./entrypoint.sh"]

Clone the GitHub repo to your local computer and switch to the devops branch.

git clone https://github.com/frandi-devops-learn/demo-sms-api.git

cd demo-sms-api/

git branch -a

git checkout -b devops

git pull origin devops

2. Docker image push to DockerHub

After cloning the repo, we need to build this Docker image and push it to Docker Hub. In my lab, I use the public Docker Hub to store my container image. In this lab, I build Docker images for two types of architecture, so you can run either arm64 or amd64.

docker buildx build --platform linux/arm64,linux/amd64 -t zinlinhtetdevops/demo-sms-api:latest . --push

You can also pull my ready-to-use container image.

docker pull zinlinhtetdevops/demo-sms-api:latest

docker images

3. Installation of Helm

curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-4

chmod 700 get_helm.sh

sudo ./get_helm.sh

4. Installation of MetalLB

I use Helm to install MetalLB on my Kubernetes cluster. The install command also creates a dedicated namespace for MetalLB.

helm repo list

helm repo add metallb https://metallb.github.io/metallb

helm install metallb metallb/metallb --create-namespace --namespace metallb-system

Now you can see that the MetalLB pods are running successfully.

Next, MetalLB needs an IPAddressPool and an L2Advertisement before it can hand out addresses. Here is a breakdown of what this configuration does.

4.1. IPAddressPool

This is the "bucket" of IP addresses that MetalLB is allowed to hand out.

Range: 192.168.1.200 - 192.168.1.205.

Purpose: When you create a Service with type: LoadBalancer, MetalLB will grab one of these 6 IPs and assign it to that service.

Crucial Requirement: These IPs must not be in use by your router's DHCP server. If your router hands out .200 to your phone, and MetalLB hands it to your API, you'll have an IP conflict.
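Before applying the pool, it can help to list the addresses and check that nothing on the network already answers on them. The sketch below is only an illustration: the helper names `list_pool_ips` and `find_used_ips` are made up for this example, it assumes the whole range shares the first three octets, and the `ping` flags assume a Linux host.

```shell
# Print every address in a pool such as 192.168.1.200-192.168.1.205.
# Assumption: both ends of the range share the first three octets.
list_pool_ips() {
  prefix=$1   # e.g. 192.168.1
  first=$2    # e.g. 200
  last=$3     # e.g. 205
  i=$first
  while [ "$i" -le "$last" ]; do
    echo "$prefix.$i"
    i=$((i + 1))
  done
}

# Report addresses that answer one ping, which means they are in use.
# Assumption: Linux ping flags (-c count, -W timeout in seconds).
# Not called here; run it yourself on the lab network.
find_used_ips() {
  list_pool_ips "$@" | while read -r ip; do
    if ping -c 1 -W 1 "$ip" >/dev/null 2>&1; then
      echo "in use: $ip"
    fi
  done
}

list_pool_ips 192.168.1 200 205
```

Running `find_used_ips 192.168.1 200 205` on the lab network should print nothing if the range is free. A silent host that blocks ICMP will not show up, so it is still worth checking the DHCP range in your router settings.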

4.2. L2Advertisement

This tells MetalLB how to announce those IPs to your local network.

Layer 2 (L2): This is the simplest mode. It uses standard ARP (Address Resolution Protocol).

How it works: One of your nodes will basically shout to your router: "Hey, I am now the owner of 192.168.1.200!" The router then sends all traffic for that IP to that node.

kubectl create -f metallb.yml

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: first-pool
  namespace: metallb-system
spec:
  addresses:
    - 192.168.1.200-192.168.1.205
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: advertisement
  namespace: metallb-system
spec:
  ipAddressPools:
    - first-pool

5. Installation of Rancher Local path provisioner

In a local Kubernetes environment (like K3s, Minikube, or Kind), managing storage can be frustrating. By default, Kubernetes doesn't automatically "give" you storage just because you ask for a Persistent Volume Claim (PVC). You usually have to manually create a Persistent Volume (PV) first.

The Rancher Local Path Provisioner solves this by providing Dynamic Provisioning for local storage.

Without this provisioner, if you want to deploy a database like MySQL or PostgreSQL, you have to:

1. Manually create a directory on your laptop/server.

2. Manually write a YAML for a Persistent Volume pointing to that path.

3. Then create your Persistent Volume Claim.

With the Local Path Provisioner, you skip steps 1 and 2. You just ask for a PVC, and the provisioner automatically creates a unique folder on your host machine and handles the PV creation for you.

Install the Rancher Local Path Provisioner using the kubectl command.

kubectl apply -f https://raw.githubusercontent.com/rancher/local-path-provisioner/master/deploy/local-path-storage.yaml

kubectl get namespace

kubectl get all -n local-path-storage

6. Create Namespace

In Kubernetes, a Namespace is essentially a "virtual cluster" within your physical cluster. If you only have one small app, you might not notice the need for them, but as soon as your project grows, namespaces become your best friend for organization and security. After creating the namespace, I change the default namespace of my current context to demo-sms.

kubectl create namespace demo-sms

kubectl get namespace

kubectl config set-context --current --namespace demo-sms

kubectl config get-contexts

7. Deploy PostgreSQL database on Kubernetes

It’s time to deploy the PostgreSQL database. In this lab, you need to deploy a StatefulSet for the database. Even if the database pod restarts, your data needs to exist and be persistent.

In Kubernetes, a Deployment is great for "stateless" things like your web app or an API where it doesn't matter which pod is which. But a database like PostgreSQL is "stateful"—it cares deeply about its identity and its data. Using a StatefulSet provides the specific "guardrails" that a database needs to run safely without corrupting data.
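One of those guardrails is a stable identity: a StatefulSet Pod is named after the set plus an ordinal, and it gets a predictable DNS name through the Service named in serviceName. Here is a small sketch of how that name is put together. The helper `sts_pod_dns` is made up for illustration, and it assumes the default cluster domain cluster.local. Note that the per-Pod name only resolves if a headless Service with that name exists, which this lab does not create, so the API connects through the regular ClusterIP Service instead.

```shell
# Build the stable DNS name of a StatefulSet Pod:
#   <statefulset>-<ordinal>.<service>.<namespace>.svc.cluster.local
# Assumption: the cluster uses the default domain cluster.local.
sts_pod_dns() {
  echo "$1-$2.$3.$4.svc.cluster.local"
}

# Names taken from the StatefulSet manifest used in this lab.
sts_pod_dns demo-sms-db 0 demo-sms-db demo-sms
```

With replicas: 1 the only Pod is always demo-sms-db-0, even after a restart, which is why the same volume can be reattached to it.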

First, I need to create a Persistent Volume Claim so that a volume can be attached to the PostgreSQL database container. So let's create a PVC. Here is the pvc.yml file.

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: db-pvc
  namespace: demo-sms
spec:
  accessModes:
    - ReadWriteOnce
  volumeMode: Filesystem
  resources:
    requests:
      storage: 10Gi
  storageClassName: local-path

kubectl get pvc

Before creating the database, we need to create a secret for the database password, because I don't want to hardcode it in the PostgreSQL StatefulSet. Here is how to base64-encode your database password.

echo -n "yourpassword" | base64
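To make the encoding step less of a black box, here is a small round-trip sketch. It uses a sample password (the one that matches the encoded value in sec.yml below) and assumes a `base64` tool that accepts `--decode`, as GNU coreutils does.

```shell
# Encode a sample password the way a Secret's data field expects it.
# printf avoids the trailing newline that a plain echo would add.
password='dbadmin123'   # sample value only; use your own password
encoded=$(printf '%s' "$password" | base64)
echo "$encoded"

# Decode it again to confirm the round trip. Base64 is reversible,
# so this is encoding, not encryption.
decoded=$(printf '%s' "$encoded" | base64 --decode)
echo "$decoded"
```

Because anyone who can read the Secret can decode it, treat the manifest file as sensitive. As an alternative to encoding by hand, `kubectl create secret generic postgres-secret --from-literal=postgres-password=yourpassword -n demo-sms` does the encoding for you.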

kubectl create -f sec.yml

kubectl get secrets

apiVersion: v1
data:
  postgres-password: ZGJhZG1pbjEyMw==  # Put your base64-encoded database password here
kind: Secret
metadata:
  name: postgres-secret
  namespace: demo-sms

So let's deploy the PostgreSQL database as a StatefulSet. Here is the stateful-set.yml file.

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: demo-sms-db
  namespace: demo-sms
spec:
  serviceName: "demo-sms-db"
  replicas: 1
  selector:
    matchLabels:
      app: demo-sms-db
  template:
    metadata:
      labels:
        app: demo-sms-db
    spec:
      containers:
        - name: demo-sms-db
          image: postgres:16-alpine
          env:
            - name: POSTGRES_DB
              value: "uatdb"
            - name: POSTGRES_USER
              value: "dbadmin"
            - name: POSTGRES_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: postgres-secret
                  key: postgres-password
          ports:
            - containerPort: 5432
          resources:
            requests:
              cpu: "250m"
              memory: "512Mi"
          volumeMounts:
            - mountPath: /var/lib/postgresql/data
              name: db-storage
              subPath: postgres-data
      volumes:
        - name: db-storage
          persistentVolumeClaim:
            claimName: db-pvc

kubectl create -f stateful-set.yml

kubectl get pods

kubectl get pvc

kubectl get statefulset

Once the StatefulSet is running properly, I expose my PostgreSQL service using the ClusterIP type since we only need to access this database within the cluster. However, I also want to connect to my database from outside the cluster, so I expose it again using the NodePort type. I will show you how to expose a service with both ClusterIP and NodePort types.

This is the ClusterIP type.

apiVersion: v1
kind: Service
metadata:
  labels:
    app: demo-sms-db
  name: demo-sms-db-svc
  namespace: demo-sms
spec:
  ports:
    - port: 5432
      protocol: TCP
      targetPort: 5432
  selector:
    app: demo-sms-db
  type: ClusterIP
status:
  loadBalancer: {}

kubectl get svc

kubectl create -f cluster-svc.yml

kubectl get svc

This is the NodePort type. I use the node port number 32110.

apiVersion: v1
kind: Service
metadata:
  labels:
    app: demo-sms-db
  name: demo-sms-db-extenal
  namespace: demo-sms
spec:
  ports:
    - port: 5432
      protocol: TCP
      targetPort: 5432
      nodePort: 32110
  selector:
    app: demo-sms-db
  type: NodePort
status:
  loadBalancer: {}

kubectl create -f node-svc.yml

kubectl get svc

I use pgAdmin 4 to connect to my database. 192.168.1.108 is the IP of my Kubernetes node. You need to use the NodePort number (32110), not the actual PostgreSQL port (5432). Then you can connect to your database from pgAdmin.
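If you prefer the command line over pgAdmin, the same connection details can be put into a connection URI. This is just a sketch: the helper names `is_valid_nodeport` and `pg_uri` are invented for this example, and the range check assumes the default NodePort range of 30000-32767, which a cluster admin can change.

```shell
# Check a port against the default NodePort range (30000-32767).
# Assumption: the cluster keeps the default service-node-port-range.
is_valid_nodeport() {
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Build a PostgreSQL connection URI: postgresql://user@host:port/dbname
pg_uri() {
  echo "postgresql://$1@$2:$3/$4"
}

# Node IP, NodePort, user, and database name as used in this lab.
if is_valid_nodeport 32110; then
  pg_uri dbadmin 192.168.1.108 32110 uatdb
fi
```

With the psql client installed, `psql "$(pg_uri dbadmin 192.168.1.108 32110 uatdb)"` should prompt for the password and open a session through the NodePort.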

You can see there are no tables because the Prisma database migration has not been applied. But after the backend API container is deployed, you will see the tables.

8. Deploy Backend API on Kubernetes

After the database is successfully deployed, we will deploy the backend API on Kubernetes. In this deployment, I set the replica count to 2 and specify resource requests and limits. I also use an init container to make sure the database is ready before the web app tries to connect.
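The init container in deploy.yml waits with an `until` loop that never gives up. That is fine for a lab, but if the database never comes up, the Pod just sits in the Init state forever. Below is a sketch of a bounded retry that fails after a fixed number of attempts instead. The `retry` function is my own illustration, not something from the repo.

```shell
# Retry a command until it succeeds or the attempt budget runs out.
# Usage: retry <max_attempts> <sleep_seconds> <command...>
retry() {
  max=$1
  delay=$2
  shift 2
  attempt=1
  while ! "$@"; do
    if [ "$attempt" -ge "$max" ]; then
      echo "giving up after $attempt attempts" >&2
      return 1
    fi
    echo "attempt $attempt failed, retrying in ${delay}s..." >&2
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# Inside the init container this could look like:
#   retry 30 2 nc -z demo-sms-db-svc 5432
# Here a command that always succeeds stands in for the port check.
retry 3 0 true && echo "ready"
```

When the retry budget is exhausted the init container exits with a non-zero code, so the failure becomes visible in `kubectl get pods` instead of hiding behind an endless wait.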

Explanation of the deployment file

namespace: demo-sms : Isolates this app in its own namespace.

strategy: RollingUpdate : Replaces Pods gradually instead of all at once, so the API stays available during an update.

maxSurge: 1 : Kubernetes may start 1 extra Pod above the replica count before removing an old one.

maxUnavailable: 0 : Kubernetes will never have fewer than 2 available Pods during an update.

Requests : What the scheduler reserves for each Pod (0.25 CPU and 128Mi RAM).

Limits : The maximum a container can consume. If it tries to use more than 256Mi RAM, it is killed (OOMKilled) to protect the rest of the node. CPU usage above 500m is throttled rather than killed.
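The rolling update numbers are easier to see with a bit of arithmetic. This sketch only redoes the math with the values from the Deployment (absolute numbers, not percentages), so nothing here talks to the cluster.

```shell
# Values from the Deployment in this lab.
replicas=2
max_surge=1
max_unavailable=0

# Upper bound on Pods that may exist at once during a rollout.
max_pods=$((replicas + max_surge))
# Lower bound on Pods that must stay available during a rollout.
min_available=$((replicas - max_unavailable))

echo "max pods during rollout: $max_pods"
echo "min available pods:      $min_available"

# Memory the scheduler must find room for at the peak, based on the
# 128Mi request per Pod (requests, not limits, drive scheduling).
echo "peak requested memory:   $((max_pods * 128))Mi"
```

So during an update the cluster briefly needs room for a third Pod. If the nodes cannot fit it, the rollout waits instead of taking an old Pod down.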

This is the deploy.yml file.

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: demo-sms-api
  name: demo-sms-api
  namespace: demo-sms
spec:
  replicas: 2
  selector:
    matchLabels:
      app: demo-sms-api
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
  template:
    metadata:
      labels:
        app: demo-sms-api
    spec:
      initContainers:
        - name: wait-for-db-up
          image: busybox:1.28
          command: ['sh', '-c', "until nc -zv demo-sms-db-svc 5432; do echo 'Waiting for database...'; sleep 2; done"]
      containers:
        - image: zinlinhtetdevops/demo-sms-api:latest
          name: demo-sms-api
          ports:
            - containerPort: 3000
          env:
            - name: DB_HOST
              value: "demo-sms-db-svc"
            - name: DB_USER
              value: "dbadmin"
            - name: DB_NAME
              value: "uatdb"
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: postgres-secret
                  key: postgres-password
          resources:
            requests:
              memory: "128Mi"
              cpu: "250m"
            limits:
              memory: "256Mi"
              cpu: "500m"
          readinessProbe:
            httpGet:
              path: /health
              port: 3000
            initialDelaySeconds: 5
            periodSeconds: 10
          livenessProbe:
            httpGet:
              path: /health
              port: 3000
            initialDelaySeconds: 15
            periodSeconds: 20

kubectl get pods

kubectl create -f deploy.yml

kubectl get deployments

kubectl get pods

kubectl logs -f pods/demo-sms-api-7bb5bc6c97-dtdh9 -c wait-for-db-up

kubectl logs -f pods/demo-sms-api-7bb5bc6c97-dtdh9

Now that we have successfully deployed the backend API, it's time to expose the backend service using the ClusterIP type. However, I want to use a domain name, like api.demo-sms.local, so I will configure the Nginx Ingress Controller.

Why do we need to use the Nginx Ingress Controller?

With MetalLB alone, each service you want to expose (API, Frontend, Admin Dashboard) needs its own unique IP from your 192.168.1.200-205 range. You will run out of IPs very quickly. With Ingress, you use one LoadBalancer IP (e.g., 192.168.1.200) for the NGINX Controller, and it routes traffic to many different services based on the URL.

How it works with MetalLB

In my local setup, the relationship looks like this:

MetalLB provides a stable IP (e.g., 192.168.1.200) to the NGINX Ingress Service.

My Domain Name (or /etc/hosts file) points to that IP.

NGINX Ingress looks at the incoming request and sends it to my demo-sms-api Pod.

So, let's install and set up the Nginx Ingress Controller on Kubernetes. First, we need to expose our backend API service. The API service should be of type ClusterIP (internal only), because the Ingress will handle the external traffic.

Here is the svc-cluster.yml file.

apiVersion: v1
kind: Service
metadata:
  labels:
    app: demo-sms-api
  name: demo-sms-api-cluster
  namespace: demo-sms
spec:
  ports:
    - port: 80
      protocol: TCP
      targetPort: 3000
  selector:
    app: demo-sms-api
  type: ClusterIP

kubectl create -f svc-cluster.yml

kubectl get svc

Now let's install the Nginx Ingress Controller.

kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.8.2/deploy/static/provider/cloud/deploy.yaml

kubectl get namespace

kubectl get all -n ingress-nginx

kubectl get svc -n ingress-nginx

Now you can see that MetalLB has assigned an IP (192.168.1.200) to the Nginx Ingress Controller load balancer.

Now create the Ingress resource. This is the routing rule that tells NGINX to send traffic for api.demo-sms.local to the API service. Here is the ingress.yml file.

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-sms-ingress
  namespace: demo-sms
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  ingressClassName: nginx
  rules:
    - host: api.demo-sms.local
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: demo-sms-api-cluster
                port:
                  number: 80

kubectl create -f ingress.yml

kubectl get ingress -n demo-sms

It's time to access our backend API through the Nginx Ingress Controller. First, you need to map the LoadBalancer IP address to the domain name in the /etc/hosts file. Without this, your local machine cannot resolve the domain name.

sudo vi /etc/hosts

192.168.1.200 api.demo-sms.local
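If you script your lab setup, you may not want to add the same line twice every time the script runs. Here is a small sketch of an idempotent version. The function name `add_host_entry` is made up for this example, and it runs against a temporary file so it is safe to try without touching the real /etc/hosts.

```shell
# Append "<ip> <hostname>" to a hosts file only if the hostname is
# not already listed. The file path is a parameter so the function
# can be tried on a copy first.
add_host_entry() {
  file=$1
  ip=$2
  host=$3
  if grep -qwF -- "$host" "$file" 2>/dev/null; then
    echo "$host already present in $file"
  else
    printf '%s %s\n' "$ip" "$host" >> "$file"
    echo "added $host -> $ip"
  fi
}

# Demonstrate on a temporary file rather than the real /etc/hosts.
tmp_hosts=$(mktemp)
add_host_entry "$tmp_hosts" 192.168.1.200 api.demo-sms.local
add_host_entry "$tmp_hosts" 192.168.1.200 api.demo-sms.local
cat "$tmp_hosts"
```

The second call changes nothing, so the file ends up with a single entry. If you only want a quick test without editing any hosts file, `curl -H "Host: api.demo-sms.local" http://192.168.1.200/health` sends the host name directly to the Ingress IP (the /health path is the one used by the probes in deploy.yml).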

9. Testing with Postman

Now open your browser and enter the domain name. You should see the backend API working properly.

I use Postman to test the basic CRUD operations on my backend API.

By completing this lab, you will have established a robust, scalable, and externally accessible API infrastructure that adheres to Kubernetes best practices for stateful applications and on-premise networking.

Congratulations, you did it! This lab was completed successfully without any errors. See you in the next article. If you have any issues, please let me know and I will be happy to assist you. Stay tuned and let's learn together. If you find my article useful, please like and share it.